
9/4/2023

Torch cross entropy loss

Binary cross-entropy loss is the special case of cross-entropy loss used for two-class problems and for multilabel classification (taggers), where each label is an independent yes/no decision. PyTorch's CrossEntropyLoss expects integer class labels as the target. Older releases do not accept a one-hot-encoded target at all, while newer ones (1.10 and later) also accept class probabilities with the same shape as the input. CrossEntropyLoss also supports what it calls the K-dimensional case, where input and target carry extra dimensions beyond batch and class, for example a per-pixel loss over 2D images.

torch.nn.functional.binary_cross_entropy takes the following parameters in addition to input:

- target (Tensor) – Tensor of the same shape as input with values between 0 and 1.
- weight (Tensor, optional) – a manual rescaling weight. If provided, it is repeated to match the input tensor shape.
- size_average (bool, optional) – Deprecated (see reduction). By default, the losses are averaged over each loss element in the batch. Note that for some losses there are multiple elements per sample. If size_average is set to False, the losses are instead summed for each minibatch.
- reduce (bool, optional) – Deprecated (see reduction). By default, the losses are averaged or summed over observations for each minibatch depending on size_average. When reduce is False, returns a loss per batch element instead and ignores size_average.
- reduction (str, optional) – Specifies the reduction to apply to the output: 'none', 'mean' or 'sum'. 'none': no reduction is applied; 'mean': the sum of the output is divided by the number of elements in the output; 'sum': the output is summed. Note: size_average and reduce are in the process of being deprecated, and in the meantime, specifying either of those two args will override reduction. Default: 'mean'.
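To make the reduction and weight options concrete, here is a minimal sketch that calls torch.nn.functional.binary_cross_entropy with each reduction mode. The tensor values and the names pred, target and weight are made up for illustration and are not from the original post.

```python
import torch
import torch.nn.functional as F

# Illustrative values only: three predicted probabilities and their targets.
pred = torch.tensor([0.9, 0.2, 0.6])
target = torch.tensor([1.0, 0.0, 1.0])

per_element = F.binary_cross_entropy(pred, target, reduction="none")
summed = F.binary_cross_entropy(pred, target, reduction="sum")
mean = F.binary_cross_entropy(pred, target, reduction="mean")

# 'sum' adds up the per-element losses; 'mean' divides that sum by the element count.
assert torch.allclose(summed, per_element.sum())
assert torch.allclose(mean, per_element.sum() / per_element.numel())

# weight rescales each element's loss before any reduction is applied.
weight = torch.tensor([1.0, 2.0, 0.5])
weighted = F.binary_cross_entropy(pred, target, weight=weight, reduction="none")
assert torch.allclose(weighted, weight * per_element)
```

With reduction="none" the result keeps the shape of the input, which is handy when you want to mask or reweight individual elements yourself before averaging.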

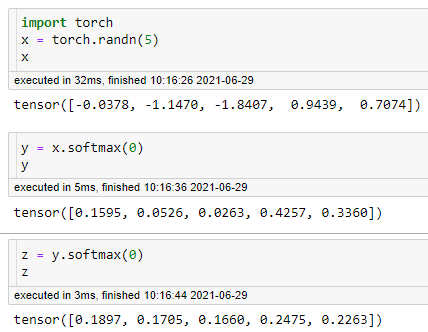
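As a rough illustration of how CrossEntropyLoss handles its targets, the sketch below compares integer class labels with an equivalent one-hot float target and then runs a small K-dimensional (per-pixel) case. The shapes and values are hypothetical, and the class-probability target assumes PyTorch 1.10 or later.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F

torch.manual_seed(0)
criterion = nn.CrossEntropyLoss()

# Hypothetical batch: 4 samples, 3 classes, unnormalized logits.
logits = torch.randn(4, 3)
class_idx = torch.tensor([0, 2, 1, 2])  # integer class labels, shape (4,)

loss_idx = criterion(logits, class_idx)

# The same loss written out by hand: mean negative log-softmax at the target index.
log_probs = F.log_softmax(logits, dim=1)
manual = -log_probs[torch.arange(4), class_idx].mean()
assert torch.allclose(loss_idx, manual)

# Assumes PyTorch >= 1.10: a float target with the same shape as the logits is
# treated as class probabilities, so a one-hot float tensor gives the same loss.
one_hot = F.one_hot(class_idx, num_classes=3).float()
loss_prob = criterion(logits, one_hot)
assert torch.allclose(loss_idx, loss_prob)

# K-dimensional case: logits of shape (N, C, H, W), labels of shape (N, H, W).
pixel_logits = torch.randn(2, 3, 5, 5)
pixel_labels = torch.randint(0, 3, (2, 5, 5))
pixel_loss = criterion(pixel_logits, pixel_labels)
```

Note that the input is raw logits in every case; CrossEntropyLoss applies the log-softmax internally, so no softmax layer is needed before it.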

Cross entropy measures the difference between two probability distributions. For the multi-class case, torch.nn.CrossEntropyLoss(weight=None, size_average=None, ignore_index=-100, reduce=None, reduction='mean', label_smoothing=0.0) and its functional form torch.nn.functional.cross_entropy(input, target, weight=None, size_average=None, ignore_index=-100, reduce=None, reduction='mean', label_smoothing=0.0) compute the cross entropy loss between input logits and target. Their main parameters are:

- input (Tensor) – predicted unnormalized logits.
- target (Tensor) – ground truth class indices or class probabilities.

The Shape section of the PyTorch documentation lists the supported shapes for both.

torch.nn.functional.binary_cross_entropy(input, target, weight=None, size_average=None, reduce=None, reduction='mean') measures the binary cross entropy between the target and the input probabilities. Here input is a tensor of arbitrary shape holding probabilities.

At the moment, the code is written for torch 1.4. First, binary cross entropy loss:

    # using pytorch 1.4
    import torch

    def logit_sanitation(val, min_val):
        # Body reconstructed from the description below: unsqueeze, concatenate, max.
        unsqueezed_a = torch.unsqueeze(val, -1)
        limit = torch.ones_like(unsqueezed_a) * min_val
        values, _ = torch.max(torch.cat((unsqueezed_a, limit), -1), -1)
        return values

    def manual_bce_loss(pred_tensor, gt_tensor, epsilon=1e-8):
        a = logit_sanitation(1 - pred_tensor, epsilon)
        b = logit_sanitation(pred_tensor, epsilon)
        loss = -((1 - gt_tensor) * torch.log(a) + gt_tensor * torch.log(b))
        return loss

The above binary cross entropy calculation tries to avoid NaN occurrences caused by excessively small values being passed to torch.log. For an input of exactly 0, torch.log returns -inf, and multiplying that by a zero coefficient gives NaN. The epsilon value limits the minimum of the original value, so the log always stays finite. (The code calls these values logits, but they are probabilities between 0 and 1.)

Currently, torch 1.6 is out, and according to the pytorch docs the torch.max function can receive two tensors and return element-wise max values. However, in 1.4 this feature is not yet supported, and that is why I had to unsqueeze, concatenate and then apply torch.max in the above snippet. If you are using torch 1.6, you can refactor the logit_sanitation function with the updated torch.max function.

Focal loss is also used quite frequently, so here it is, using the functions defined above:

    def manual_focal_loss(pred_tensor, gt_tensor, gamma, epsilon=1e-8):
        a = logit_sanitation(1 - pred_tensor, epsilon)
        b = logit_sanitation(pred_tensor, epsilon)
        logit = (1 - gt_tensor) * a + gt_tensor * b
        focal_loss = -(1 - logit) ** gamma * torch.log(logit)
        return focal_loss
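The refactor suggested for newer torch versions could look roughly like the sketch below. It assumes a release where torch.max accepts two tensors, reuses the post's function names, and picks the sample values purely to hit the 0 and 1 edge cases. torch.clamp with a lower bound is shown as an equivalent alternative, not as the author's method.

```python
import torch
import torch.nn.functional as F

def logit_sanitation(val, min_val):
    # Two-tensor torch.max returns the element-wise maximum, so no
    # unsqueeze/concatenate step is needed.
    return torch.max(val, torch.full_like(val, min_val))

def manual_bce_loss(pred_tensor, gt_tensor, epsilon=1e-8):
    a = logit_sanitation(1 - pred_tensor, epsilon)
    b = logit_sanitation(pred_tensor, epsilon)
    return -((1 - gt_tensor) * torch.log(a) + gt_tensor * torch.log(b))

# Sample predictions that include the problematic values 0 and 1.
pred = torch.tensor([0.0, 0.3, 0.8, 1.0])
gt = torch.tensor([0.0, 1.0, 1.0, 1.0])

loss = manual_bce_loss(pred, gt)
assert torch.isfinite(loss).all()

# torch.clamp with a lower bound applies the same floor.
assert torch.equal(logit_sanitation(pred, 1e-8), torch.clamp(pred, min=1e-8))

# Without the floor, log(0) = -inf and 0 * -inf = nan.
naive = -((1 - gt) * torch.log(1 - pred) + gt * torch.log(pred))
assert torch.isnan(naive).any()

# Away from 0 and 1 the manual loss matches the built-in function.
builtin = F.binary_cross_entropy(pred[1:3], gt[1:3], reduction="none")
assert torch.allclose(manual_bce_loss(pred[1:3], gt[1:3]), builtin)
```

The manual loss is returned per element, so it corresponds to reduction='none' in the built-in function; call .mean() or .sum() on it to get a scalar.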
